
| | MemoryCopyInstr (Value *src, classid_t src_cid, Value *dest, classid_t dest_cid, Value *src_start, Value *dest_start, Value *length, bool unboxed_inputs, bool can_overlap=true) |

| |

| virtual Representation | RequiredInputRepresentation (intptr_t index) const |

| |

| virtual bool | ComputeCanDeoptimize () const |

| |

| virtual bool | HasUnknownSideEffects () const |

| |

| virtual bool | AttributesEqual (const Instruction &other) const |

| |

| Value * | src () const |

| |

| Value * | dest () const |

| |

| Value * | src_start () const |

| |

| Value * | dest_start () const |

| |

| Value * | length () const |

| |

| classid_t | src_cid () const |

| |

| classid_t | dest_cid () const |

| |

| intptr_t | element_size () const |

| |

| bool | unboxed_inputs () const |

| |

| bool | can_overlap () const |

| |

| virtual Instruction * | Canonicalize (FlowGraph *flow_graph) |

| |

| PRINT_OPERANDS_TO_SUPPORT | DECLARE_ATTRIBUTE (element_size()) |

| |

| | DECLARE_INSTRUCTION_SERIALIZABLE_FIELDS (MemoryCopyInstr, TemplateInstruction, FIELD_LIST) |

| |

| private: void | EmitUnrolledCopy (FlowGraphCompiler *compiler, Register dest_reg, Register src_reg, intptr_t num_elements, bool reversed) |

| |

| void | PrepareLengthRegForLoop (FlowGraphCompiler *compiler, Register length_reg, compiler::Label *done) |

| |

| void | EmitLoopCopy (FlowGraphCompiler *compiler, Register dest_reg, Register src_reg, Register length_reg, compiler::Label *done, compiler::Label *copy_forwards=nullptr) |

| |

| | DISALLOW_COPY_AND_ASSIGN (MemoryCopyInstr) |

| |

| | TemplateInstruction (intptr_t deopt_id=DeoptId::kNone) |

| |

| | TemplateInstruction (const InstructionSource &source, intptr_t deopt_id=DeoptId::kNone) |

| |

| virtual intptr_t | InputCount () const |

| |

| virtual Value * | InputAt (intptr_t i) const |

| |

| virtual bool | MayThrow () const |

| |

| | Instruction (const InstructionSource &source, intptr_t deopt_id=DeoptId::kNone) |

| |

| | Instruction (intptr_t deopt_id=DeoptId::kNone) |

| |

| virtual | ~Instruction () |

| |

| virtual Tag | tag () const =0 |

| |

| virtual intptr_t | statistics_tag () const |

| |

| intptr_t | deopt_id () const |

| |

| virtual TokenPosition | token_pos () const |

| |

| InstructionSource | source () const |

| |

| virtual intptr_t | InputCount () const =0 |

| |

| virtual Value * | InputAt (intptr_t i) const =0 |

| |

| void | SetInputAt (intptr_t i, Value *value) |

| |

| InputsIterable | inputs () |

| |

| void | UnuseAllInputs () |

| |

| virtual intptr_t | ArgumentCount () const |

| |

| Value * | ArgumentValueAt (intptr_t index) const |

| |

| Definition * | ArgumentAt (intptr_t index) const |

| |

| virtual void | SetMoveArguments (MoveArgumentsArray *move_arguments) |

| |

| virtual MoveArgumentsArray * | GetMoveArguments () const |

| |

| virtual void | ReplaceInputsWithMoveArguments (MoveArgumentsArray *move_arguments) |

| |

| bool | HasMoveArguments () const |

| |

| void | RepairArgumentUsesInEnvironment () const |

| |

| virtual bool | ComputeCanDeoptimize () const =0 |

| |

| virtual bool | ComputeCanDeoptimizeAfterCall () const |

| |

| bool | CanDeoptimize () const |

| |

| virtual void | Accept (InstructionVisitor *visitor)=0 |

| |

| Instruction * | previous () const |

| |

| void | set_previous (Instruction *instr) |

| |

| Instruction * | next () const |

| |

| void | set_next (Instruction *instr) |

| |

| void | LinkTo (Instruction *next) |

| |

| Instruction * | RemoveFromGraph (bool return_previous=true) |

| |

| virtual intptr_t | SuccessorCount () const |

| |

| virtual BlockEntryInstr * | SuccessorAt (intptr_t index) const |

| |

| SuccessorsIterable | successors () const |

| |

| void | Goto (JoinEntryInstr *entry) |

| |

| virtual const char * | DebugName () const =0 |

| |

| void | CheckField (const Field &field) const |

| |

| const char * | ToCString () const |

| |

| | DECLARE_INSTRUCTION_TYPE_CHECK (BlockEntryWithInitialDefs, BlockEntryWithInitialDefs) |

| |

| template<typename T > |

| T * | Cast () |

| |

| template<typename T > |

| const T * | Cast () const |

| |

| LocationSummary * | locs () |

| |

| bool | HasLocs () const |

| |

| virtual LocationSummary * | MakeLocationSummary (Zone *zone, bool is_optimizing) const =0 |

| |

| void | InitializeLocationSummary (Zone *zone, bool optimizing) |

| |

| virtual void | EmitNativeCode (FlowGraphCompiler *compiler) |

| |

| Environment * | env () const |

| |

| void | SetEnvironment (Environment *deopt_env) |

| |

| void | RemoveEnvironment () |

| |

| void | ReplaceInEnvironment (Definition *current, Definition *replacement) |

| |

| virtual intptr_t | NumberOfInputsConsumedBeforeCall () const |

| |

| intptr_t | GetPassSpecificId (CompilerPass::Id pass) const |

| |

| void | SetPassSpecificId (CompilerPass::Id pass, intptr_t id) |

| |

| bool | HasPassSpecificId (CompilerPass::Id pass) const |

| |

| bool | HasUnmatchedInputRepresentations () const |

| |

| virtual Representation | RequiredInputRepresentation (intptr_t idx) const |

| |

| SpeculativeMode | SpeculativeModeOfInputs () const |

| |

| virtual SpeculativeMode | SpeculativeModeOfInput (intptr_t index) const |

| |

| virtual Representation | representation () const |

| |

| bool | WasEliminated () const |

| |

| virtual intptr_t | DeoptimizationTarget () const |

| |

| virtual Instruction * | Canonicalize (FlowGraph *flow_graph) |

| |

| void | InsertBefore (Instruction *next) |

| |

| void | InsertAfter (Instruction *prev) |

| |

| Instruction * | AppendInstruction (Instruction *tail) |

| |

| virtual bool | AllowsCSE () const |

| |

| virtual bool | HasUnknownSideEffects () const =0 |

| |

| virtual bool | CanCallDart () const |

| |

| virtual bool | CanTriggerGC () const |

| |

| virtual BlockEntryInstr * | GetBlock () |

| |

| virtual intptr_t | inlining_id () const |

| |

| virtual void | set_inlining_id (intptr_t value) |

| |

| virtual bool | has_inlining_id () const |

| |

| virtual uword | Hash () const |

| |

| bool | Equals (const Instruction &other) const |

| |

| virtual bool | AttributesEqual (const Instruction &other) const |

| |

| void | InheritDeoptTarget (Zone *zone, Instruction *other) |

| |

| bool | NeedsEnvironment () const |

| |

| virtual bool | CanBecomeDeoptimizationTarget () const |

| |

| void | InheritDeoptTargetAfter (FlowGraph *flow_graph, Definition *call, Definition *result) |

| |

| virtual bool | MayThrow () const =0 |

| |

| virtual bool | MayHaveVisibleEffect () const |

| |

| virtual bool | CanEliminate (const BlockEntryInstr *block) const |

| |

| bool | CanEliminate () |

| |

| bool | IsDominatedBy (Instruction *dom) |

| |

| void | ClearEnv () |

| |

| void | Unsupported (FlowGraphCompiler *compiler) |

| |

| virtual bool | UseSharedSlowPathStub (bool is_optimizing) const |

| |

| Location::Kind | RegisterKindForResult () const |

| |

| | ZoneAllocated () |

| |

| void * | operator new (size_t size) |

| |

| void * | operator new (size_t size, Zone *zone) |

| |

| void | operator delete (void *pointer) |

| |

Definition at line 3146 of file il.h.
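
The constructor's `can_overlap` flag and the `reversed` parameter of `EmitUnrolledCopy` indicate that the copy direction matters when the source and destination ranges may overlap. The following is a minimal, standalone sketch of that general technique (choosing a back-to-front copy when the destination starts inside the source range). It is not the Dart VM's implementation: `CopyElements` and `DemoOverlappingCopy` are hypothetical names introduced only for illustration, and the sketch works on plain byte buffers rather than on IL values or registers.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper, not part of the Dart VM. Copies `length` elements of
// `element_size` bytes each from src[src_start...] to dest[dest_start...].
// If `can_overlap` is true and the destination begins inside the source
// range, the bytes are copied back to front so that no source byte is
// overwritten before it has been read.
inline void CopyElements(const uint8_t* src, uint8_t* dest,
                         size_t src_start, size_t dest_start,
                         size_t length, size_t element_size,
                         bool can_overlap = true) {
  assert(element_size > 0);
  const uint8_t* from = src + src_start * element_size;
  uint8_t* to = dest + dest_start * element_size;
  const size_t bytes = length * element_size;

  // Compare addresses as integers; the buffers may be unrelated objects.
  const uintptr_t from_addr = reinterpret_cast<uintptr_t>(from);
  const uintptr_t to_addr = reinterpret_cast<uintptr_t>(to);
  const bool reversed =
      can_overlap && from_addr < to_addr && to_addr < from_addr + bytes;

  if (reversed) {
    for (size_t i = bytes; i > 0; --i) to[i - 1] = from[i - 1];
  } else {
    for (size_t i = 0; i < bytes; ++i) to[i] = from[i];
  }
}

// Illustrative use: shift the first four bytes of a buffer right by two
// positions within the same buffer, which forces the reversed path.
inline std::vector<uint8_t> DemoOverlappingCopy() {
  std::vector<uint8_t> buf = {1, 2, 3, 4, 5, 6};
  CopyElements(buf.data(), buf.data(), /*src_start=*/0, /*dest_start=*/2,
               /*length=*/4, /*element_size=*/1);
  return buf;  // {1, 2, 1, 2, 3, 4}
}
```

A plain front-to-back loop on the same input would yield `{1, 2, 1, 2, 1, 2}`, because the first two bytes would be re-read after being written into positions 2 and 3. When the caller can prove the ranges never overlap, passing `can_overlap = false` skips the direction check in this sketch.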

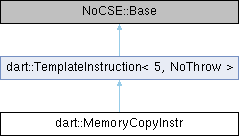

Public Types inherited from dart::TemplateInstruction< 5, NoThrow >

Public Types inherited from dart::Instruction

Static Public Member Functions inherited from dart::Instruction

Static Public Attributes inherited from dart::Instruction

Protected Member Functions inherited from dart::Instruction

Protected Attributes inherited from dart::TemplateInstruction< 5, NoThrow >